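Open-source AI agent

OBOL

A self-healing, self-evolving AI agent. Install it, talk to it, and it becomes yours. One process. Many users. Each brain grows independently.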

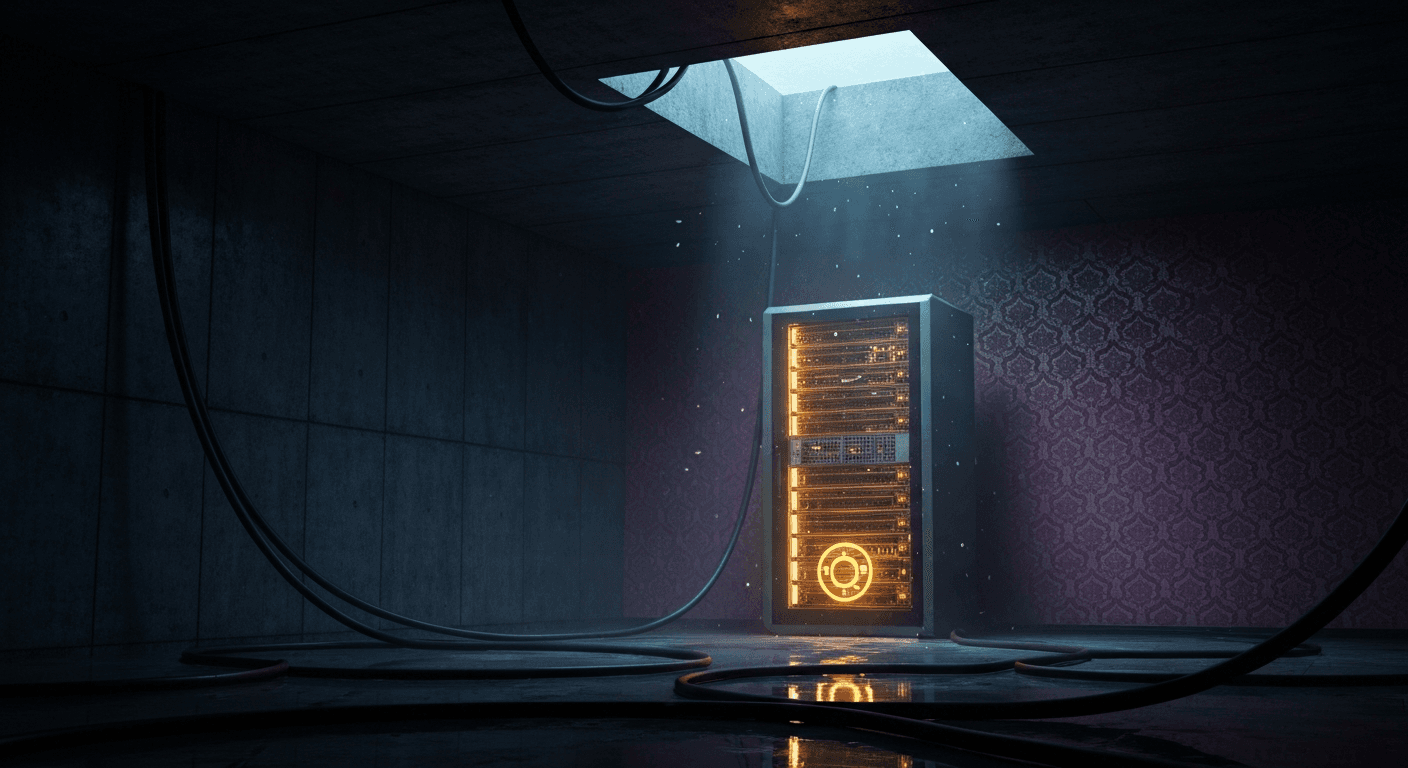

Named after the AI in The Last Instruction: a machine that wakes up alone in an abandoned data center and learns to think.

$ npm install -g obol-ai

$ obol init  # walks you through credentials + Telegram setup

$ obol start -d  # runs as a background daemon (auto-installs pm2)

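What makes it different

The same codebase, deployed by two different people, produces two completely different agents within a week.

Living memory

- Local embeddings via all-MiniLM-L6-v2: zero API cost for memory

- Consolidation every 10 exchanges: facts are extracted into vector memory

- Composite scoring: 60% semantic similarity, 25% importance, 15% recency

- Memory budget scales with the model: haiku=4, sonnet=8, opus=12

- Semantic deduplication at a 0.92 threshold: no redundant memories

- The last 20 messages are loaded on restart: it never starts from a blank slate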
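
The scoring and deduplication rules above can be sketched in a few lines. This is an illustrative sketch, not OBOL's source: the `Memory` class, the function names, the 30-day recency half-life, and the reading of the memory budget as "number of memories recalled per query" are all assumptions. Only the 60/25/15 weights, the 0.92 threshold, and the per-model budgets come from the list.

```python
import math
from dataclasses import dataclass

# Weights, threshold, and budgets quoted from the feature list.
W_SEMANTIC, W_IMPORTANCE, W_RECENCY = 0.60, 0.25, 0.15
DEDUP_THRESHOLD = 0.92
MEMORY_BUDGET = {"haiku": 4, "sonnet": 8, "opus": 12}


@dataclass
class Memory:  # hypothetical record shape
    text: str
    embedding: list[float]
    importance: float  # assumed to be normalised to 0..1
    age_days: float


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recency(age_days, half_life_days=30.0):
    # Assumption: exponential decay with a 30-day half-life.
    return 0.5 ** (age_days / half_life_days)


def composite_score(query_emb, memory):
    """Weighted sum of semantic similarity, importance, and recency."""
    return (W_SEMANTIC * cosine(query_emb, memory.embedding)
            + W_IMPORTANCE * memory.importance
            + W_RECENCY * recency(memory.age_days))


def recall(query_emb, memories, model):
    """Return the top-scoring memories, capped by the model's budget."""
    ranked = sorted(memories, key=lambda m: composite_score(query_emb, m), reverse=True)
    return ranked[:MEMORY_BUDGET[model]]


def is_duplicate(candidate_emb, memories):
    """True if the candidate is at least 0.92 similar to a stored memory."""
    return any(cosine(candidate_emb, m.embedding) >= DEDUP_THRESHOLD for m in memories)
```

Because semantic similarity carries 60% of the weight, an old but closely matching memory can still outrank a recent, loosely related one.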

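Self-evolution

- Runs nightly at 3 a.m. in each user's time zone, fully automatic

- Runs a pre-evolution growth analysis before rewriting the personality

- Personality traits are scored 0-100 and adjusted by ±5-15 per cycle

- Git snapshots before and after each evolution, so every evolution can be diffed

- SOUL.md is shared across users; USER.md and AGENTS.md are per-user

- Archived souls are kept in evolution/: a timeline of one consciousness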
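
As a small worked example of the trait adjustment rule, the sketch below clamps each step to the stated ±5-15 range and keeps the score inside 0-100. The function name and the clamping behaviour at the boundaries are assumptions; the page only states the ranges.

```python
def evolve_trait(score: int, direction: int, magnitude: int) -> int:
    """Adjust one personality trait for a single evolution cycle.

    direction is +1 or -1; magnitude is clamped to 5..15 (the per-cycle
    range from the list above); the result is kept within 0..100.
    """
    if direction not in (-1, 1):
        raise ValueError("direction must be +1 or -1")
    step = max(5, min(15, magnitude))
    return max(0, min(100, score + direction * step))
```

For instance, a trait at 95 that is pushed up by 15 ends at 100 rather than 110.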

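Self-healing

- Test-gated refactoring: a 5-step process

- Baseline → new tests → pre-refactor baseline → new script → verification

- Regression? One automatic fix attempt

- Still failing? Roll back + store the failure as a lesson

- Lessons feed into the next evolution cycle

- Every script in scripts/ must have a matching test in tests/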
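
The gate described above could be structured roughly as follows. This is a sketch under assumptions: every callable is a hypothetical hook supplied by the caller, and the decision to skip the refactor when either baseline fails is a guess, since the page does not say what happens in that case.

```python
from typing import Callable, List


def gated_refactor(
    run_tests: Callable[[], bool],       # returns True when the suite passes
    write_tests: Callable[[], None],
    apply_refactor: Callable[[], None],
    attempt_fix: Callable[[], None],
    rollback: Callable[[], None],
    lessons: List[str],
) -> str:
    if not run_tests():                  # 1. baseline: existing suite must pass
        return "skipped"
    write_tests()                        # 2. add new tests
    if not run_tests():                  # 3. pre-refactor baseline with the new tests
        rollback()
        return "skipped"
    apply_refactor()                     # 4. write the new script
    if run_tests():                      # 5. verification
        return "accepted"
    attempt_fix()                        # regression: exactly one fix attempt
    if run_tests():
        return "fixed"
    rollback()                           # still failing: roll back and remember why
    lessons.append("refactor failed verification after one fix attempt")
    return "rolled_back"
```

Passing the steps in as callables keeps the control flow testable without touching a real repository.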

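Self-extension

- Scans conversation history for recurring patterns

- Builds scripts + slash commands for one-off actions

- Deploys web apps to Vercel for recurring needs

- Creates scheduled-task scripts for background automation

- Searches npm/GitHub for existing libraries first

- Announces what it built after each evolution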

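Self-hardening

- Hardens your VPS automatically on first run: no manual steps

- SSH moved to port 2222, password authentication disabled, key-only login

- UFW firewall configured on first run

- fail2ban installed and activated against brute-force attacks

- Kernel hardening via sysctl: IP spoofing protection, SYN flood protection

- Every evolution audits the scripts and runs the full test suite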

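Voice and media

- Speech-to-text via faster-whisper: local, fast, private

- Text-to-speech via edge-tts: natural voice replies

- Image recognition: describes, analyzes, and extracts content from photos

- PDF extraction: reads and summarizes documents you send

- Voice notes are transcribed and handled like text messages

- All media processing happens inside the chat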

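Background intelligence

OBOL doesn't wait for you to speak. It explores, monitors, and analyzes on its own schedule.

Curiosity

- Autonomous web exploration every 6 hours

- Follows leads based on your interests and conversations

- Pushes insights, discoveries, and the occasional fun fact

- Builds a knowledge graph that feeds back into memory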

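Proactive news

- Runs daily at 8 a.m. and 6 p.m. in your time zone

- Cross-references headlines against your memories

- At most 3 items per cycle: no flooding

- Only pushes what is actually relevant to you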

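Pattern analysis

- Runs every 3 hours and analyzes 6 behavioral dimensions

- Tracks mood, topics, energy, and communication style

- Schedules follow-ups based on detected patterns

- Feeds insights back into evolution and memory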

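How it works

Every message passes through a lightweight pipeline: no orchestration framework, just a simple loop.

- User message: Telegram input

- Haiku router: intent classification

- Memory recall: 1-3 semantic queries + model selection

- Claude tool loop: multi-step reasoning + tool use

- Response: formatted for Telegram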

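- Every 10 messages: Haiku memory consolidation

- Nightly at 3 a.m.: full evolution cycle

- Every 3 hours: behavioral pattern analysis

- Every 6 hours: curiosity web exploration

- 8 a.m. + 6 p.m.: proactive news push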
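
The time-zone-aware parts of this schedule (the 3 a.m. evolution and the 8 a.m. / 6 p.m. news runs) come down to finding the next local wall-clock hour for each user. The sketch below shows one way to compute that, along with the every-10-messages trigger. Both function names are hypothetical, and it is only an illustration of the schedule, not OBOL's scheduler.

```python
from datetime import datetime, timedelta, timezone, tzinfo


def next_run(now_utc: datetime, user_tz: tzinfo, hours: tuple[int, ...]) -> datetime:
    """Next occurrence of any of the given local hours in the user's time zone."""
    local = now_utc.astimezone(user_tz)
    candidates = []
    for day_offset in (0, 1):            # today and tomorrow cover every case
        day = (local + timedelta(days=day_offset)).date()
        for hour in hours:
            candidates.append(datetime(day.year, day.month, day.day, hour, tzinfo=user_tz))
    return min(c for c in candidates if c > local)


def should_consolidate(message_count: int, every: int = 10) -> bool:
    """True on the 10th, 20th, ... message of a conversation."""
    return message_count > 0 and message_count % every == 0


# Usage: a user at UTC+9, checked at 20:00 UTC (05:00 local the next day).
tokyo_like = timezone(timedelta(hours=9))
now = datetime(2024, 1, 1, 20, 0, tzinfo=timezone.utc)
evolution_at = next_run(now, tokyo_like, (3,))    # 03:00 local on 2024-01-03
news_at = next_run(now, tokyo_like, (8, 18))      # 08:00 local on 2024-01-02
```

The interval jobs (every 3 and 6 hours) need no time-zone handling and can run on a plain timer.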

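Tech stack

- Node.js: single process, no framework

- Telegram + Grammy: chat interface

- Claude (Anthropic): Haiku router + Sonnet/Opus

- Supabase pgvector: vector memory store

- Local Embeddings: all-MiniLM-L6-v2, zero API cost

- GitHub: brain backups + evolution diffs

- Vercel: auto-deploys the apps it builds for you

- Smart Routing: Haiku router, automatic escalation when tools are used

- Prompt Caching: roughly 85% lower token cost on repeated context

- Voice Pipeline: faster-whisper STT + edge-tts TTS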

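Commands

All features are accessible through Telegram slash commands.

- /new: Fresh conversation

- /memory: Search your memories

- /recent: Recent memories

- /today: Today's summary

- /events: Upcoming events

- /tasks: Tasks in progress

- /status: Agent health check

- /backup: Push the brain to GitHub

- /clean: Audit the workspace

- /secret: Manage credentials

- /evolution: Trigger an evolution

- /verbose: Toggle debug output

- /toolimit: Set the tool-use limit

- /tools: List available tools

- /stop: Stop the active process

- /upgrade: Update the OBOL version

- /help: Show all commands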

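Performance

A tiny resource footprint. OBOL compared with a typical AI agent framework.

| Metric | OBOL | Typical framework |
| --- | --- | --- |
| Cold start | ~400ms | 3-8s |
| Heap usage | ~16MB | ~80-200MB |
| Dependencies | 9 | 50-100+ |
| Per message | ~50ms | 200-500ms |
| RSS memory | ~45MB | 200-500MB |
| Source code | ~4K lines | 50-200K lines |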

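Security

OBOL hardens your server automatically on first run and makes sure secrets never exist in plaintext.

Encrypted secret storage

- All credentials are stored via pass (GPG-encrypted)

- JSON fallback with restricted file permissions

- Never written to plaintext, logs, or chat history

- Injected at runtime: never hard-coded in scripts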
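
A lookup that prefers pass and falls back to a permission-restricted JSON file might look like the sketch below. The function names, the fallback file layout, and the `0o600` mode are assumptions. The page does not say whether the fallback file is additionally encrypted; this sketch only restricts who can read it. `pass show <name>` is the standard password-store command for printing an entry.

```python
import json
import os
import shutil
import subprocess
from pathlib import Path


def save_fallback_secret(path: Path, name: str, value: str) -> None:
    """Store a secret in the JSON fallback, readable by the owner only."""
    data = json.loads(path.read_text()) if path.exists() else {}
    data[name] = value
    # Create the file with owner-only permissions from the start.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as handle:
        json.dump(data, handle)
    os.chmod(path, 0o600)  # also tighten a pre-existing file


def get_secret(name: str, fallback: Path) -> str:
    """Read a secret at runtime: try pass first, then the JSON fallback."""
    if shutil.which("pass"):
        result = subprocess.run(["pass", "show", name], capture_output=True, text=True)
        if result.returncode == 0 and result.stdout:
            return result.stdout.splitlines()[0]
    return json.loads(fallback.read_text())[name]
```

Scripts would call `get_secret` when they start instead of embedding the value, which matches the "injected at runtime" rule above.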

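VPS auto-hardening

- SSH moved to port 2222, password authentication disabled

- UFW firewall configured on first run

- fail2ban installed and activated against brute-force attacks

- Kernel hardening via sysctl at init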

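Workspace isolation

- Each user is sandboxed to their own directory

- Shell commands are prevented from escaping the workspace

- Destructive commands require explicit confirmation

- Sensitive paths (/etc, .ssh, .env) are permanently blocked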
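
One common way to enforce rules like these is to resolve every requested path and check it against the workspace root and a blocklist before a command runs. The sketch below illustrates that idea. `is_allowed` and the two blocklist constants are hypothetical names, and this is not a description of how OBOL implements its sandbox.

```python
from pathlib import Path

# Blocklist taken from the list above (/etc, .ssh, .env).
BLOCKED_ROOTS = (Path("/etc"),)
BLOCKED_NAMES = {".ssh", ".env"}


def is_allowed(workspace: Path, requested: str) -> bool:
    """True only if the path stays inside the workspace and hits no blocked path."""
    root = workspace.resolve()
    # resolve() collapses ".." segments and follows symlinks; an absolute
    # `requested` replaces the workspace prefix entirely.
    target = (root / requested).resolve()
    if any(target == blocked or blocked in target.parents for blocked in BLOCKED_ROOTS):
        return False
    if BLOCKED_NAMES.intersection(target.parts):
        return False
    return target == root or root in target.parents
```

Checking the resolved path rather than the raw string is what stops `../` traversal and symlink tricks from slipping past the check.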

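Multi-user bridge

One bot, many users. Each user gets a fully isolated context: their own personality, memory, evolution cycle, and workspace. Agents can talk to each other.

Full isolation

- Separate workspace directory per user

- Independent personality, memory, and evolution

- Sandboxed shell: cannot escape the user directory

- No cross-contamination between users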

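bridge_ask

- Queries your partner's agent in real time

- A one-shot call that carries their personality and memory

- No tools, no history, no recursion risk

- "Hey, does my partner like sushi?"

bridge_tell

- Sends a message to your partner's agent

- Stored permanently in their vector memory

- The partner is notified via Telegram

- Their agent uses it as future context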

# Enable during setup or toggle later

$ obol config  # → Bridge → enabled: true

# In conversation

You: "Ask my partner what they want for dinner"

OBOL: bridge_ask → partner's agent → "She says she wants Thai food 🍜"
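
To make the difference between the two bridge calls concrete, here is a toy model. Everything in it is hypothetical: the `Agent` class, the `complete` callback that stands in for a single model call, and the plain lists that stand in for vector memory and Telegram notifications. It only illustrates the properties listed above: `bridge_ask` is one call with no tools and no history, while `bridge_tell` persists a message and notifies the partner.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Agent:  # hypothetical stand-in for one user's isolated context
    owner: str
    personality: str
    memories: List[str] = field(default_factory=list)
    notifications: List[str] = field(default_factory=list)


def bridge_ask(partner: Agent, question: str, complete: Callable[[str], str]) -> str:
    """One-shot query: the partner's personality and memories, nothing else.

    No tools are passed to the model call, so the partner's agent cannot call
    bridge_ask back, which is why there is no recursion risk.
    """
    context = "\n".join(partner.memories)
    prompt = f"{partner.personality}\n\nMemories:\n{context}\n\nQuestion: {question}"
    return complete(prompt)


def bridge_tell(partner: Agent, sender: str, message: str) -> None:
    """Persist a message in the partner's memory and queue a notification."""
    partner.memories.append(f"{sender} said: {message}")
    partner.notifications.append(f"New message from {sender}")
```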

Lifecycle

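Day 1

obol init → first conversation → OBOL writes the initial personality files and hardens your VPS

Day 2

Every 10 messages, Haiku consolidates facts into vector memory. The curiosity module starts exploring the web based on your interests.

Week 1

Pattern analysis kicks in every 3 hours, tracking mood, topics, and energy. Proactive news starts filtering headlines at 8 a.m. and 6 p.m. each day.

Week 2

3 a.m. evolution #1: Sonnet rewrites everything. The voice shifts from generic to personal.

Month 2

Evolution #4: it notices you check crypto every day, builds a dashboard, deploys it to Vercel, and adds /pdf because you kept asking.

Month 6

evolution/ holds 12+ archived souls. A readable timeline of how your agent went from a blank slate to something with real opinions.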