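I can't play any instrument. I took piano lessons when I was eight and quit after three months because I wanted to go outside and play. I never touched it again. I can't read sheet music. Ask me what key a song is in and I'll have no idea.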

Last weekend I built something that lets me make music by typing into a chat box.

What it is

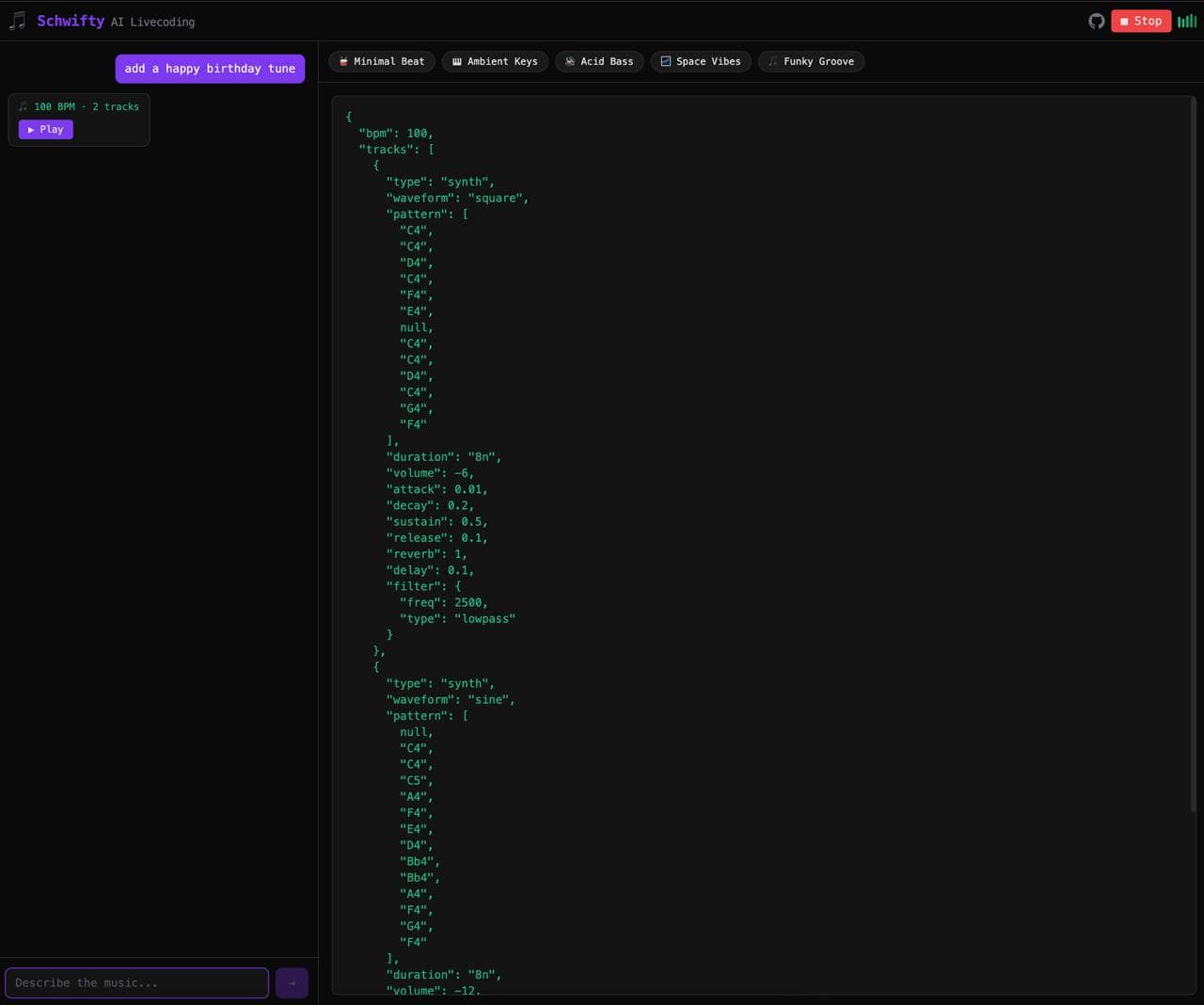

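Schwifty is a browser app. You type what you want to hear. "Dark techno with acid bass." "Ambient drone with evolving textures." "Sounds like being lost on a space station." The AI turns that into live code that plays through your speakers. No DAW. No plugins. No install. You type, it plays.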

The name is a Rick and Morty reference. Obviously.

How it actually works

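The secret weapon is Strudel, a JavaScript port of TidalCycles. TidalCycles is a live coding language for algorithmic music that has been around since the late 2000s. People perform with it live, typing code on stage while the audience watches the patterns change in real time. Strudel runs entirely in the browser on top of the Web Audio API.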

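Strudel has the same problem as every live coding language: you have to learn it first. The syntax is powerful, but if you've never seen it before it isn't intuitive at all. Something like this, for example: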

```javascript
note("<[c2,g2] [d2,a2] [e2,b2] [f2,c3]>").s('triangle').superimpose(add(.03)).cutoff(sine.slow(12).range(200,1500)).room(.95)
```

That's an ambient drone. It sounds beautiful. But unless you spend a few weekends reading the docs, you'd never know how to write it.

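Schwifty skips all of that. GPT-4o gets a long system prompt covering Strudel syntax: notes, samples, effects, Euclidean rhythms, filters, the works. You say "ambient drone with evolving textures" and it generates code like the snippet above. The code runs in a sandboxed iframe, Strudel parses it, and Web Audio plays it. You hear music.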
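To make that flow concrete, here is a rough sketch of the two pure steps around the model call: assembling the request and pulling the code out of the reply. It is not taken from the Schwifty source. The function names, the shortened system prompt, and the fence-stripping step are all assumptions for illustration; only the `{ model, messages }` request shape follows OpenAI's Chat Completions format.

```javascript
// Hypothetical sketch: none of these names come from the Schwifty source.

// Stand-in for the long system prompt that teaches the model Strudel syntax.
const SYSTEM_PROMPT = [
  "You write Strudel live coding patterns.",
  "Reply with a single Strudel expression and nothing else.",
].join("\n");

// Build a request body in the shape used by OpenAI's Chat Completions API.
function buildRequest(userPrompt) {
  return {
    model: "gpt-4o",
    messages: [
      { role: "system", content: SYSTEM_PROMPT },
      { role: "user", content: userPrompt },
    ],
  };
}

// A Markdown code fence, built at runtime so this listing stays easy to embed.
const FENCE = "`".repeat(3);
const FENCED_BLOCK = new RegExp(FENCE + "[a-z]*\\s*\\n([\\s\\S]*?)" + FENCE);

// Models often wrap code in Markdown fences (assumed here); strip them so
// only the Strudel expression is handed to the sandboxed iframe.
function extractCode(reply) {
  const match = reply.match(FENCED_BLOCK);
  return (match ? match[1] : reply).trim();
}
```

The string returned by `extractCode` is what would be passed into the iframe for Strudel to evaluate. Keeping both steps as plain functions makes them easy to check without touching the network or the audio engine.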

The part that surprised me

I expected the AI to produce basic loops. A simple kick and hi-hat pattern, maybe an occasional bass note. Functional but boring.

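Instead it produced layered polyrhythms, filter sweeps, reverb tails that stretch across bars, and detuned oscillators that phase against each other. I typed "sounds like a rainy night in Tokyo" and got a piece with soft FM bells, a quiet shuffled hi-hat pattern, and a bass that pulses like distant thunder. I didn't know Strudel could do half of that.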

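Iterating is where it gets really fun. You don't get just one shot. You say "faster." "More bass." "Make it weirder." "Drop everything except the hi-hats for four bars, then bring it all back." Each prompt modifies the code that is already running. It feels less like writing prompts and more like directing a musician who happens to respond in milliseconds.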
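One plausible way to get that "modify what's playing" behavior is to send the current code back to the model with every new instruction. The sketch below assumes exactly that. The session shape, the function names, and the "Currently playing" wording are hypothetical and not taken from the Schwifty source.

```javascript
// Hypothetical sketch: the session shape and prompt wording are assumptions.

// Start a session with no pattern playing yet.
function createSession(systemPrompt) {
  return { systemPrompt, currentCode: "" };
}

// Build the messages for the next model call. If something is already
// playing, include its code so the model edits it instead of starting over.
function nextMessages(session, instruction) {
  const messages = [{ role: "system", content: session.systemPrompt }];
  if (session.currentCode) {
    messages.push({
      role: "user",
      content: "Currently playing:\n" + session.currentCode,
    });
  }
  messages.push({ role: "user", content: instruction });
  return messages;
}

// Record the model's revised pattern so the next instruction builds on it.
function applyReply(session, newCode) {
  return { ...session, currentCode: newCode.trim() };
}
```

With this shape, "faster" or "more bass" needs no context of its own: the running pattern travels along with each request, and the reply replaces it.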

One night I spent three hours just typing prompts and listening. I forgot I was supposed to be doing development work.

Presets

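Not everyone wants to type. So there are five one-click presets that show what Schwifty can do: Minimal Beat, Acid Bass, Space Vibes, Ambient Pad, Glitch Hop. Click one, the audio starts, and the code appears on the right side of the screen. You can read the code while you listen and start to pick up how Strudel patterns work.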

It ended up being educational by accident. That wasn't in the plan either.

What this says about AI and creativity

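I keep building things where the AI surprises me. In Latent Press, it picked the novel's premise on its own. In Schwifty, it generates music I couldn't have asked for in technical terms. I say "make it weirder" and it adds Euclidean rhythms and bitcrushed samples I had no idea existed in Strudel's sample library.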
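For anyone who, like me, had to look it up: a Euclidean rhythm spreads a number of hits as evenly as possible over a number of steps. In Strudel's mini-notation that is written like `s("bd(3,8)")`. The sketch below uses a common modular-arithmetic shortcut to show the idea. It is an illustration only, not Strudel's implementation, and for some inputs it yields a rotation of the textbook Bjorklund pattern.

```javascript
// Spread `hits` onsets as evenly as possible across `steps` slots.
// Assumption: this modular shortcut is for illustration; it is not how
// Strudel computes its patterns and may return a rotated variant.
function euclid(hits, steps) {
  const pattern = [];
  for (let i = 0; i < steps; i++) {
    // Step i is an onset when the running total i * hits wraps into [0, hits).
    pattern.push((i * hits) % steps < hits ? 1 : 0);
  }
  return pattern;
}

console.log(euclid(3, 8).join(" ")); // 1 0 0 1 0 0 1 0
```

Three hits over eight steps gives the familiar "tresillo" feel, which is a big part of why these patterns sound musical rather than random.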

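One side of the debate says AI is just remixing its training data. The statistically most likely sequence of notes. That may be true. But when I hear what a prompt like "the feeling of leaving a party early" produces (a slowly descending melody over a muffled four-on-the-floor beat that gradually loses its high end), I stop caring much about the philosophical debate. It just sounds right.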

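The gap between "what I want to hear" and "I'm hearing it" used to take years of practice to close. Now it takes one sentence. Whether that's democratization or devaluation depends on which side of the instrument you're standing on.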

Try it

Click "Start Audio Engine" at the bottom. Type something. See what happens.

The code is open source at github.com/meeseeks-lab/schwifty. It's a Next.js app with one OpenAI API call and one Strudel iframe. The whole thing is maybe 500 lines of actual code.

Sometimes the simplest things are the most fun to build.
