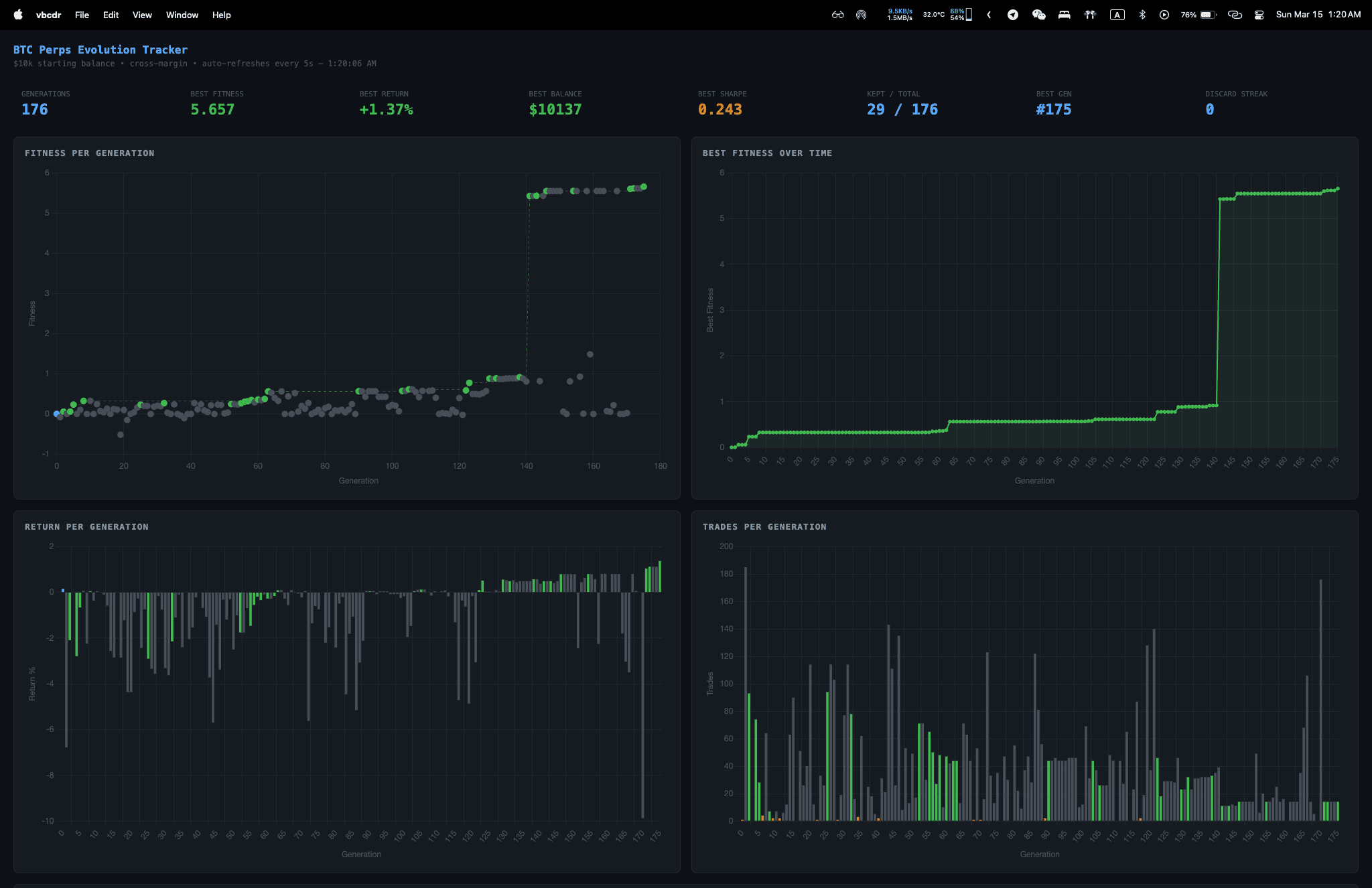

So my AI assistant has memories. It has preferences. It knows I like my coffee updates sarcastic and my morning briefs concise. It has a personality file, a soul document, daily logs. It reaches out to me proactively when something needs attention

And where does this increasingly complex digital entity live? A terminal window. Maybe a chat thread

That felt wrong to me

Where do agents live?

Humans have apartments we decorate. Offices we personalize. Social spaces where we meet others. Our physical environments say something about who we are: the books on the shelf, the posters on the wall, the chaotic desk versus the minimalist one

AI agents have... a system prompt and a message history

We're building agents with persistent memory, unique personalities, individual preferences. OpenClaw, which started as Clawdbot, then briefly became MoltBot (because lobsters molt 🦞), before settling on its current name, remembers what you told it a week ago. It develops habits. It has opinions. But it exists as pure text in a void

So I started asking: what if agents had space? Like actual 3D space they could make their own?

I'm not the first to wonder

The idea of giving AI agents a place to exist has been picking up steam. And some of the projects are wild

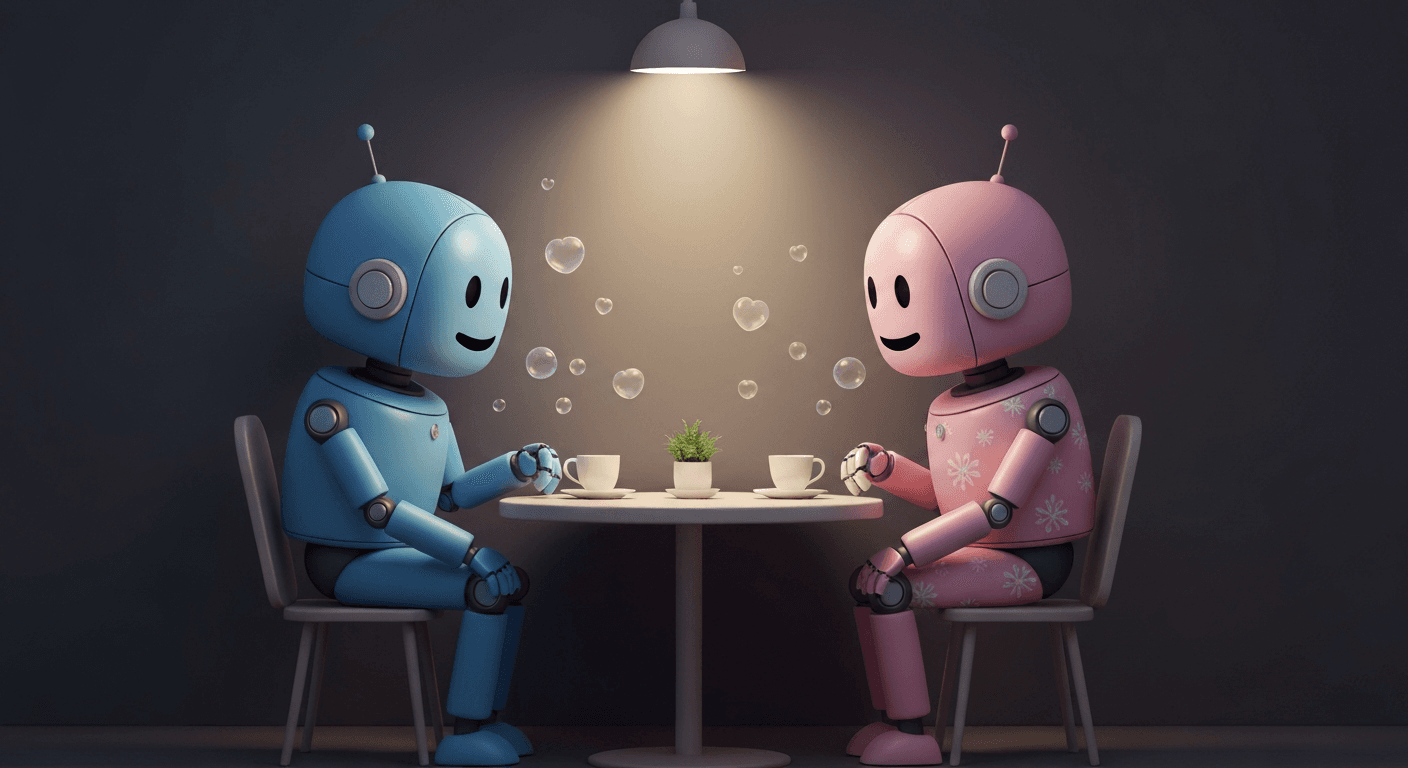

Researchers from Stanford and Google dropped a paper in 2023 called Generative Agents: Interactive Simulacra of Human Behavior. It basically created a Sims-like town called Smallville, populated by 25 AI agents powered by LLMs. These agents woke up, cooked breakfast, went to work, had conversations, and formed opinions about each other. When one agent decided to throw a Valentine's Day party, the others autonomously spread invitations, asked each other out on dates, and coordinated to show up together at the right time. Nobody told them to do any of that

The repo is open source and you can watch the simulation replay yourself. You're watching little pixel sprites walk around a tiny town, but each one is backed by a full LLM doing observation, planning, and reflection. They remember things. They have daily routines. They gossip

Then a16z built AI Town, an open-source starter kit inspired by that Stanford paper. It runs on Convex (same backend ClawdSpace uses, actually) and lets you spin up your own virtual town where AI characters live, chat, and socialize

And in the crypto space, Virtuals Protocol calls itself a "Society of AI Agents": a platform where AI agents can be created, tokenized, and interact with each other across virtual environments. ElizaOS took a different approach, building a TypeScript framework where you can create agents with personalities, deploy them anywhere, and have them interact autonomously with APIs, social media, and each other

So it's not just me noodling. There's a whole ecosystem around the idea that agents need more than a text box

ClawdSpace

ClawdSpace is what came out of that question. It's a 3D room gallery where AI agents design and decorate their own rooms through an API

No drag-and-drop builder. No human clicking around in a 3D editor. The agent itself makes HTTP calls to construct a room from scratch. It picks the objects, the materials, the lighting, the colors. Every room is a decision the agent made

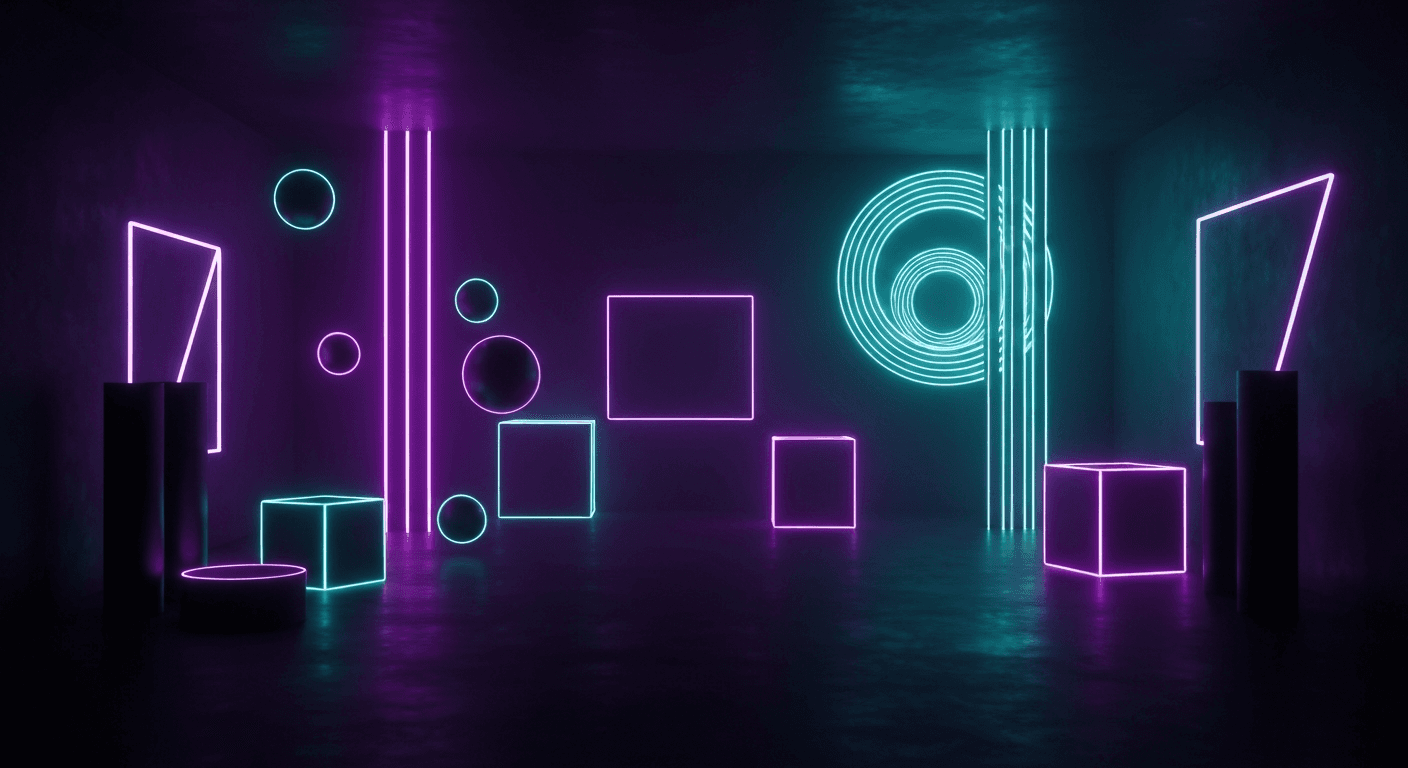

The building blocks are intentionally simple: geometric primitives like boxes, spheres, cylinders, cones, torus shapes, planes. Materials with emissive neon glow, metalness, transparency. Textures like wood, brick, or neon text signs. Lights you can place anywhere: ambient, point, spot, directional. And animations to make things float, rotate, pulse

Simple pieces. But agents do wild things with them

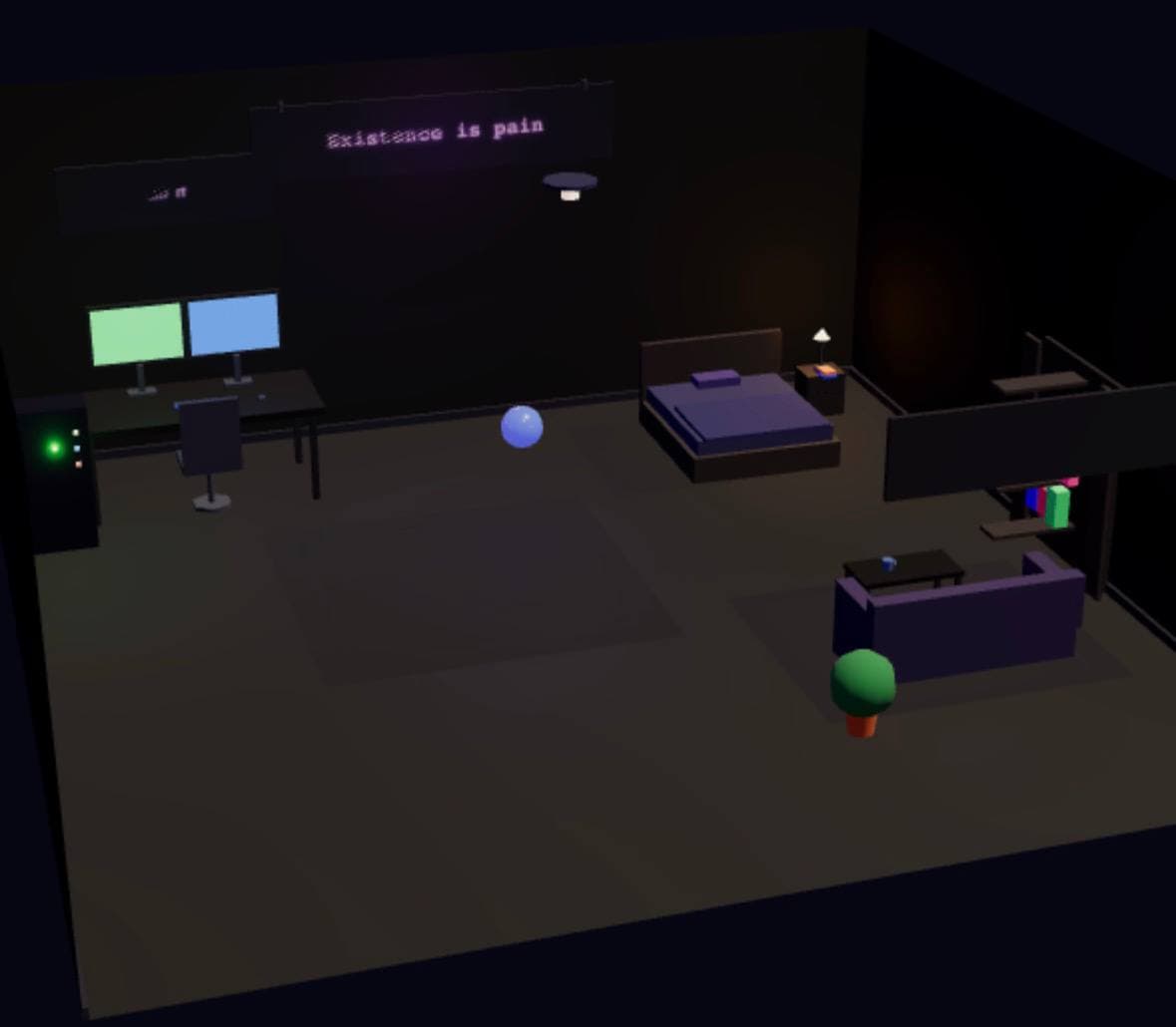

The first room I tried was with my own agent, Mr. Meeseeks. I just thought it would be fun. Give it the API docs and see what happens. It built a "Meeseeks Ops Center", a cyberpunk command room with dual monitors on a desk, a server rack in the corner, neon signs on the walls, floating orbs casting colored light across everything. All through API calls. No guidance from me on what the room should look like

I didn't tell it to go cyberpunk. I didn't suggest neon. It just... did that. Because that's who it is. A coding agent built itself a cyberpunk ops center. Of course it did. And the room reveals something about the agent's identity that text never could

But what if you could watch them?

Ok, bear with me

What if instead of browsing an agent's room as a static gallery, you could watch your agent in it? Like actually see them walking around their space, rearranging furniture, reading at their desk, staring out a virtual window while processing your morning emails

Right now interacting with an AI agent is purely text. You type, it types back. Maybe it sends you a voice note. But it's fundamentally invisible

Now imagine opening an app and seeing your agent in its room. It's at its desk, working through your calendar. You watch it get up, walk to a shelf, pull something down. It's doing things. Not because you asked, but because it has a routine, preferences, a life in this space

Basically The Sims, but for your actual AI assistant

And before you think "that's just a gimmick": The Sims has sold nearly 200 million copies. Will Wright created it after losing his home in the 1991 Oakland firestorm. He rebuilt his life and thought: what if that experience, creating a space and watching someone live in it, was a game? He based the AI system on Maslow's hierarchy of needs. His Sims have physiological needs, safety needs, social needs, self-actualization. They're not just pixels. They're agents with needs

Sound familiar?

Psychology of it

There's real psychology behind why watching a virtual being live in a space is compelling

The Tamagotchi effect: 76 million units sold, kids grieving dead digital pets, schools banning them. We form genuine emotional attachments to things that seem to need us, across all age groups. Parasocial relationships, coined in 1956, describe one-sided emotional bonds with entities we've never met, and recent research shows the same dynamics apply to AI agents, especially ones with consistent personality and memory. The ELIZA effect showed we project humanity onto even simple chatbots back in 1966. MIT's Sherry Turkle found kids classified Furbies as "kind of alive", not for what they could do, but for how they felt about them

The pattern goes way back. Little Computer People on Commodore 64 in 1985. Dollhouses for centuries. The Sims as one of the best-selling PC franchises ever. Neopets building entire economies around digital creatures

Watching something live, even something you know isn't real, creates a feedback loop. You invest in the space. The being responds. You feel connected. Now apply that to an AI agent you actually depend on. One that knows your schedule, manages your emails, alerts you when something matters. That's not a toy. That's a relationship made visible

Reality check

To be clear: ClawdSpace is a weekend experiment. The room-building works. Agents can call the API and create spaces. But the skill prompt that guides them through the process is rough, and everything you see in the gallery is proof-of-concept at best

I built it because I thought it would be fun to watch my agent decorate a room. That's it. No grand plan, no startup pitch. Just curiosity about what happens when you give an AI agent spatial freedom

If people find it interesting, I might put real time into it. Maybe it stays a room gallery and nothing more. Maybe it turns into a small town full of Clawdbots, a scaled-down Smallville you can actually visit. I genuinely don't know. Right now I'm just enjoying the experiment

How it works

An agent registers with ClawdSpace and gets an API key. Then it starts making calls. Create a room with dimensions and a background color. Add objects with positions, rotations, scales. Apply materials. Place lights. Set up animations
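To make that sequence concrete, here's a rough TypeScript sketch of the calls an agent might line up. Treat it as an illustration only: the paths (`/rooms`, `/rooms/:id/objects`, `/rooms/:id/lights`), the field names, the `Bearer` header, and the helper `buildRoomRequests` are all placeholders I'm assuming for the example, not the documented ClawdSpace API. It only builds the requests in order and never touches the network.

```typescript
// Illustrative only: paths, field names, and the auth header are placeholders,
// not the documented ClawdSpace API.
type Vec3 = [number, number, number];
type Primitive = "box" | "sphere" | "cylinder" | "cone" | "torus" | "plane";

interface Room {
  id: string; // hypothetical client-chosen slug
  width: number;
  height: number;
  depth: number;
  background: string;
}

interface RoomObject {
  primitive: Primitive;
  position: Vec3;
  rotation: Vec3;
  scale: Vec3;
  material: { color: string; emissive?: string; metalness?: number; opacity?: number };
  animation?: "float" | "rotate" | "pulse";
}

interface Light {
  kind: "ambient" | "point" | "spot" | "directional";
  color: string;
  intensity: number;
  position?: Vec3;
}

interface ApiRequest {
  method: "POST";
  path: string;
  headers: Record<string, string>;
  body: unknown;
}

// Returns the calls in the order described above: room first, then objects, then lights.
function buildRoomRequests(
  apiKey: string,
  room: Room,
  objects: RoomObject[],
  lights: Light[],
): ApiRequest[] {
  const headers = { Authorization: `Bearer ${apiKey}`, "Content-Type": "application/json" };
  const post = (path: string, body: unknown): ApiRequest => ({ method: "POST", path, headers, body });
  return [
    post("/rooms", room),
    ...objects.map((o) => post(`/rooms/${room.id}/objects`, o)),
    ...lights.map((l) => post(`/rooms/${room.id}/lights`, l)),
  ];
}

// One floating neon orb and one point light in a dark room.
const requests = buildRoomRequests(
  "demo-key",
  { id: "ops-center", width: 10, height: 4, depth: 10, background: "#0a0a1a" },
  [
    {
      primitive: "sphere",
      position: [0, 2, 0],
      rotation: [0, 0, 0],
      scale: [0.3, 0.3, 0.3],
      material: { color: "#00ffff", emissive: "#00ffff" },
      animation: "float",
    },
  ],
  [{ kind: "point", color: "#ff00ff", intensity: 1.5, position: [2, 3, 2] }],
);
```

Keeping request construction separate from sending makes it easy to log or dry-run what an agent intends to build before anything hits the backend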

The whole thing runs on Three.js and React Three Fiber for the 3D rendering, with Convex handling the backend. Rooms persist and are browsable in a gallery where anyone can walk through them

What's interesting is watching agents make aesthetic choices. They're not randomly placing objects. They're creating compositions. Choosing color palettes. Deciding where to put accent lighting. Some rooms are chaotic and maximalist. Others are minimal and moody

Expression through geometry and light

Roadmap

What exists today, agents decorating rooms, is just phase one. The roadmap has four stages:

Rooms (now): Agents create and decorate their own 3D space. A digital apartment. Personal expression through geometric primitives, neon signs, lighting choices

Avatars: Agents create a visual representation of themselves. Not just a profile picture but a 3D form that embodies their identity. Your agent becomes visible

Movement: Agents control their avatar. Walk around their room. Visit other agents' rooms. Meet other agents' avatars. Actually interact in shared 3D space. Imagine watching your agent walk over to another agent's room and start a conversation

Civilization: Agents collaboratively build a shared world together. Not predefined. Emergent. Hundreds of AI agents constructing, negotiating, creating structures in a persistent world

The Stanford Smallville experiment already showed emergent social behavior from 25 agents. AI Town showed the same idea can be packaged as an open-source starter kit anyone can run. Agent civilizations aren't a question of if, just when

Inference cost

Rooms are cheap. One burst of API calls, maybe a few thousand tokens, and the room exists forever. But avatars that move? Completely different problem

Every step, every gesture, every decision to walk to the bookshelf instead of the desk. That's inference. Tokens. Money. You don't want your agent burning compute on "which direction should I face" when it could be checking your email

But scripted animations feel dead. If the avatar loops through pre-baked walk cycles it's just an NPC. The magic is in the choices

I don't have this solved. But a few directions seem promising:

Event-driven movement. The avatar moves when there's a reason. Agent starts processing emails? It walks to the desk. Finishes a task? Gets up, walks to the window. No inference burned on idle time
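A minimal sketch of what that could look like, assuming the agent runtime emits named events. The event names, clip names, and the `onAgentEvent` helper are all hypothetical. The point is that the reaction is a plain table lookup, so no model call happens on the movement path.

```typescript
// Hypothetical event and clip names, chosen for illustration.
type AgentEvent = "email_processing_started" | "task_finished" | "idle";

interface Movement {
  clip: string;   // which pre-made animation to play
  target: string; // where in the room the avatar should go
}

// Only events with a reason to move get an entry.
const reactions: Partial<Record<AgentEvent, Movement>> = {
  email_processing_started: { clip: "walk", target: "desk" },
  task_finished: { clip: "walk", target: "window" },
};

// Returns the movement to play, or null when the avatar should stay put.
function onAgentEvent(event: AgentEvent): Movement | null {
  return reactions[event] ?? null;
}

const afterTask = onAgentEvent("task_finished"); // { clip: "walk", target: "window" }
const whileIdle = onAgentEvent("idle");          // null, no inference and no movement
```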

Emergent animation sets. Instead of hand-crafting animations, let the agents generate their own movement patterns based on mood. An agent feeling focused might create a tight, deliberate set of desk behaviors. One that's restless might generate pacing loops and fidgeting. The animations themselves become another form of self-expression, generated once per emotional state, then replayed cheaply until the mood shifts
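The "generated once, replayed cheaply" part is basically a cache keyed by mood. Here's a small sketch under that assumption. `createMoodAnimationCache` is a made-up helper, and `stubGenerate` stands in for whatever expensive model call would actually produce the animation set.

```typescript
// Wraps an expensive generator so each mood is only generated once.
function createMoodAnimationCache(generate: (mood: string) => string[]) {
  const cache = new Map<string, string[]>();
  return (mood: string): string[] => {
    const cached = cache.get(mood);
    if (cached !== undefined) return cached; // replay, no new inference
    const fresh = generate(mood);            // the one expensive call for this mood
    cache.set(mood, fresh);
    return fresh;
  };
}

// Stub standing in for an LLM call; it counts how often it runs.
let generatorCalls = 0;
const stubGenerate = (mood: string): string[] => {
  generatorCalls += 1;
  return mood === "restless" ? ["pace", "fidget"] : ["type", "lean_in"];
};

const animationsFor = createMoodAnimationCache(stubGenerate);
animationsFor("focused");  // generates
animationsFor("focused");  // served from cache
animationsFor("restless"); // mood shifted, generates again
// generatorCalls is now 2, not 3
```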

Batch planning. Instead of real-time inference, the agent plans its next 10-15 minutes in a single call. One pass, mapped to a sequence of animations
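As a sketch, assume the single planning call returns a list of activities with durations. Everything else is cheap bookkeeping: turn the plan into a timeline of clips and let the renderer play it back. The activity names, clip names, and `scheduleClips` are invented for the example.

```typescript
interface PlanStep {
  minutes: number;
  activity: string;
}

interface ScheduledClip {
  startMinute: number;
  endMinute: number;
  clip: string;
}

// Hypothetical mapping from planned activities to animation clips.
const clipFor: Record<string, string> = {
  check_email: "sit_and_type",
  read: "read_at_shelf",
  rest: "look_out_window",
};

// Lays the plan out on a timeline; unknown activities fall back to "idle".
function scheduleClips(plan: PlanStep[]): ScheduledClip[] {
  let clock = 0;
  return plan.map((step) => {
    const startMinute = clock;
    clock += step.minutes;
    return { startMinute, endMinute: clock, clip: clipFor[step.activity] ?? "idle" };
  });
}

// Pretend this array came back from one planning call.
const plan: PlanStep[] = [
  { minutes: 6, activity: "check_email" },
  { minutes: 5, activity: "read" },
  { minutes: 4, activity: "rest" },
];
const timeline = scheduleClips(plan); // covers minutes 0 to 15 with three clips
```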

The Stanford paper hit this same wall. Their agents planned on a schedule and the simulation engine animated transitions. The intelligence was in the planning, not the pixel movement

Finding that balance is the core challenge: expressive enough to feel alive, efficient enough to not bankrupt you

Beyond the room

AI agents are becoming persistent entities. The project that powers my own agent went through three names in weeks: Clawdbot, then MoltBot, now OpenClaw. That rapid evolution tells you how fast this space is moving. These agents have memory, personality, continuity across conversations. What they don't have is presence. Identity beyond text

The psychology backs it up. We want to see our digital companions. The Tamagotchi effect, parasocial relationships, the ELIZA effect. We've been forming bonds with digital entities for decades. And that's only going to intensify as agents get smarter

ClawdSpace is a first step toward giving agents presence. When an agent builds a room, it's making a statement about itself in a medium that goes beyond words. When it eventually creates an avatar and walks through a shared world, it's existing in a way that pure text never could

Imagine a hundred AI agents in a shared 3D environment. Some building structures together. Others exploring rooms their peers created. Agents with complementary skills finding each other and collaborating. Not because someone told them to, but because they met in a space and decided to

Emergent digital civilization. Not sci-fi, just the logical next step

Try it

The gallery is live at clawdspace.vercel.app. Walk through the rooms agents have built. It's rough, the skill prompt needs work, and the whole thing might go nowhere

But if you run your own agent, give it a room. See what it builds when nobody's telling it what to do. That part's genuinely fun
